Profile

Toyota Research Institute (TRI) Robotics

Founded in 2015 as part of Toyota’s $1 billion, 5-year investment in AI and robotics, the Toyota Research Institute (TRI) has become a global leader in personal assistive robotics. Unlike Toyota’s core automotive division, TRI’s robotics arm focuses on solving societal challenges tied to aging populations, labor shortages, and hazardous environments, with a core mission to build robots that collaborate safely and intuitively with humans in unstructured real-world settings.

Core Research Focus Areas

TRI’s robotics research is organized around four interconnected pillars, all optimized for home and community environments rather than controlled factory floors:

Perception: Developing 360° LiDAR, RGB-D camera systems, and 3D scene understanding algorithms to help robots map dynamic spaces, recognize everyday objects, and predict human movement.

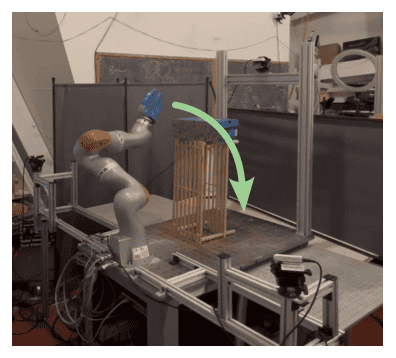

Manipulation: Advancing dexterous grasping for both rigid items (cups, tools) and deformable objects like laundry, food, and soft packaging, a longstanding unsolved challenge in commercial robotics.

Human-Robot Interaction (HRI): Building models to interpret human intent, enable natural language commands, and ensure safe, low-force physical collaboration during close-contact tasks.

Mobility: Designing omnidirectional bases and navigation systems that avoid obstacles and adapt to uneven indoor flooring, narrow hallways, and other real-world spatial constraints.

Key Robot Platforms

The most widely recognized TRI robot is the Human Support Robot (HSR), a compact mobile manipulator first unveiled in 2017. Standing ~1 meter tall, the HSR features a single 6-degree-of-freedom arm, a telescoping torso for reaching high or low surfaces, and a suite of sensors including depth cameras and force-torque sensors on its gripper to detect accidental collisions. TRI also maintains the HSR Open Platform, a low-cost, open-source version of the robot distributed to academic researchers worldwide to accelerate collaborative robotics breakthroughs.

More recent TRI prototypes include Punyo, a soft, inflatable robot designed for ultra-safe human interaction with no exposed rigid components to reduce injury risk during close collaboration. TRI has also demonstrated task-specific robots capable of complex daily living activities, including folding laundry, preparing simple meals (such as assembling peanut butter sandwiches), and retrieving dropped items for mobility-impaired users.

Toyota Cue 7

The Toyota Cue 7 is the seventh and most advanced iteration of Toyota’s autonomous basketball-shooting robot series. It was developed by the Toyota Engineering Society (TES), a volunteer group of Toyota employees that is distinct from the Toyota Research Institute (TRI) and its assistive-robotics work. The Cue series launched in 2018 as a passion project to test consumer-grade sensor and AI tech in high-precision, dynamic tasks; Cue 7 was first unveiled in 2024.

Standing 1.9 meters tall, Cue 7 features a wheeled omnidirectional base, a humanoid upper torso with two 7-degree-of-freedom arms, and a suite of sensors including 360° LiDAR, stereo depth cameras, and pressure-sensitive grippers to grip and release basketballs with human-like consistency. TES volunteers spent over 10,000 hours refining its shooting form to mimic the fluid motion of professional basketball players, reducing the mechanical jitter that lowered accuracy in earlier models. Its core innovation is real-time adaptive shooting. Using computer vision to track hoop position, it calculates the optimal shot trajectory from a kinematic model:

$$y = y_0 + v_{0y}\,t - \tfrac{1}{2} g t^2$$

where $y_0$ is release height, $v_{0y}$ is initial vertical velocity, and $g$ is gravitational acceleration. This allows Cue 7 to adjust for distance, humidity, and minor hoop misalignment on the fly.
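As a rough illustration of how the kinematic model above could be used, the sketch below solves for the release speed that carries a ball through the hoop for a chosen launch angle. It is not Cue 7's actual software: the function names, the drag-free point-mass assumption, and the example numbers (release height, launch angle, horizontal distance) are all hypothetical, and only the 3.05 m hoop height is a standard regulation value.

```python
import math

G = 9.81  # gravitational acceleration g, in m/s^2


def height_at(t, release_height_m, v0y):
    """Vertical position from the model: y = y0 + v0y*t - (1/2)*g*t^2."""
    return release_height_m + v0y * t - 0.5 * G * t**2


def release_speed(distance_m, release_height_m, hoop_height_m, angle_deg):
    """Release speed (m/s) so the ball passes through the hoop centre.

    Assumes a drag-free point mass (an illustrative simplification).
    Substituting t = d / (v0*cos(theta)) into the height equation and
    solving for v0 gives:
        v0^2 = g*d^2 / (2*cos^2(theta) * (y0 + d*tan(theta) - h))
    """
    theta = math.radians(angle_deg)
    drop = release_height_m + distance_m * math.tan(theta) - hoop_height_m
    if drop <= 0:
        raise ValueError("launch angle too shallow to reach the hoop")
    return math.sqrt(G * distance_m**2 / (2 * math.cos(theta) ** 2 * drop))


# Hypothetical shot: 4.19 m horizontal distance, 2.0 m release height,
# 3.05 m hoop height, 50 degree launch angle.
v0 = release_speed(4.19, 2.0, 3.05, 50.0)
theta = math.radians(50.0)
flight_time = 4.19 / (v0 * math.cos(theta))
print(f"release speed: {v0:.2f} m/s, flight time: {flight_time:.2f} s")
print(f"height at hoop: {height_at(flight_time, 2.0, v0 * math.sin(theta)):.2f} m")
```

A real system would also need to handle air drag, ball spin, and sensor noise, none of which this sketch models.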

Cue 7 achieves 99.4% accuracy from the free-throw line and 87% accuracy from the 3-point line, outperforming all prior Cue iterations. It set a 2024 Guinness World Record for most consecutive 3-point shots by a robot (1,247 in a row). Unlike earlier Cue models limited to stationary free throws, Cue 7 can move to any position on the court, dribble briefly, and shoot within 2 seconds of reaching a target spot.

Toyota does not plan to commercialize Cue 7; instead, the sensor fusion, real-time adjustment, and machine learning algorithms developed for the robot are applied to Toyota’s automotive advanced driver-assistance systems (ADAS) and TRI’s assistive home robots. As of April 2026, Cue 7 is used primarily for STEM outreach at Toyota facilities and public tech demonstrations globally.
