Profile

NVIDIA Robotics & Edge AI for Manufacturing: Developer Resources and Industrial Strategy

Context and Purpose

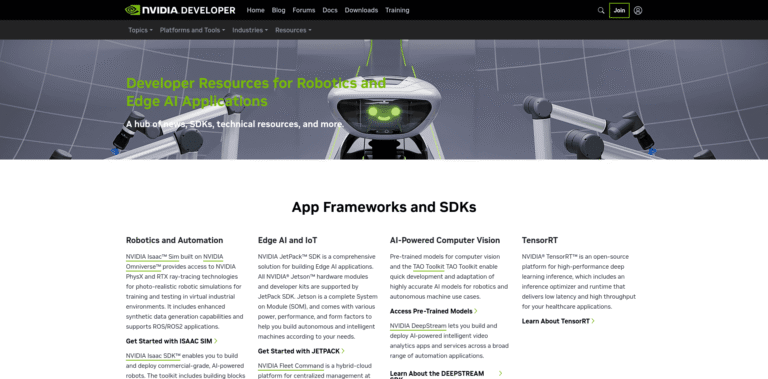

The “Robotics and Edge AI Applications” developer resources page is NVIDIA’s on‑ramp for engineers, system integrators, and manufacturers who want to move AI out of the data center and onto the factory floor. Rather than selling only chips, NVIDIA packages silicon, software libraries, reference workflows, and simulation tools into a coherent stack for autonomous mobile robots (AMRs), vision inspection, predictive maintenance, and real-time control. The goal is to compress proof‑of‑concept timelines from months to weeks while ensuring industrial-grade safety, determinism, and uptime.

The Hardware Foundation

At the core of this ecosystem are edge AI platforms built on NVIDIA GPUs and SoCs:

Jetson family (AGX Orin, Orin Nano) brings GPU-accelerated AI inference to the edge, enabling a robot to run multiple neural networks concurrently (localization, obstacle avoidance, and quality inspection) within a tight power envelope.

IGX Orin targets industrial and medical settings that require functional safety, real‑time operating system support, and hardware-enforced security, making it suitable for collaborative robots and heavy machinery.

GPU-accelerated edge servers (e.g., EGX) aggregate streams from hundreds of cameras and sensors, performing inference and federated learning on site without sending raw data to the cloud.
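The federated learning mentioned for edge servers can be illustrated with a minimal sketch of sample-weighted parameter averaging (the FedAvg idea): each site trains locally and shares only model parameters, never raw images or sensor streams. The function name `federated_average`, the dictionary layout, and the site data are hypothetical and chosen for illustration; this is not an NVIDIA API.

```python
from typing import Dict, List, Tuple

# A model is represented here as {parameter_name: flat list of floats}.
# This layout is an assumption made to keep the sketch dependency-free.
Weights = Dict[str, List[float]]


def federated_average(updates: List[Tuple[Weights, int]]) -> Weights:
    """Average per-site parameters, weighting each site by its sample count."""
    if not updates:
        raise ValueError("need at least one site update")
    total = sum(n for _, n in updates)
    if total <= 0:
        raise ValueError("total sample count must be positive")

    first_weights, _ = updates[0]
    averaged: Weights = {}
    for name, values in first_weights.items():
        acc = [0.0] * len(values)
        for weights, n in updates:
            # Each site contributes in proportion to the data it trained on.
            for i, v in enumerate(weights[name]):
                acc[i] += v * n / total
        averaged[name] = acc
    return averaged


if __name__ == "__main__":
    # Two hypothetical plants: only parameters and sample counts are shared.
    site_a = ({"layer0": [1.0, 2.0]}, 100)
    site_b = ({"layer0": [3.0, 6.0]}, 300)
    print(federated_average([site_a, site_b]))  # {'layer0': [2.5, 5.0]}
```

A production setup would add secure aggregation, versioning, and handling for sites that drop out. The sketch only shows why raw data can stay on the factory floor.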

Software Stack and Developer Experience

The developer portal organizes tooling around four pillars:

Isaac ROS – NVIDIA’s collection of hardware-accelerated ROS 2 packages, built on CUDA. Perception, manipulation, and navigation graphs can run substantially faster on Jetson than CPU-only equivalents, letting developers reuse open-source ROS code while gaining throughput suited to real-time use.

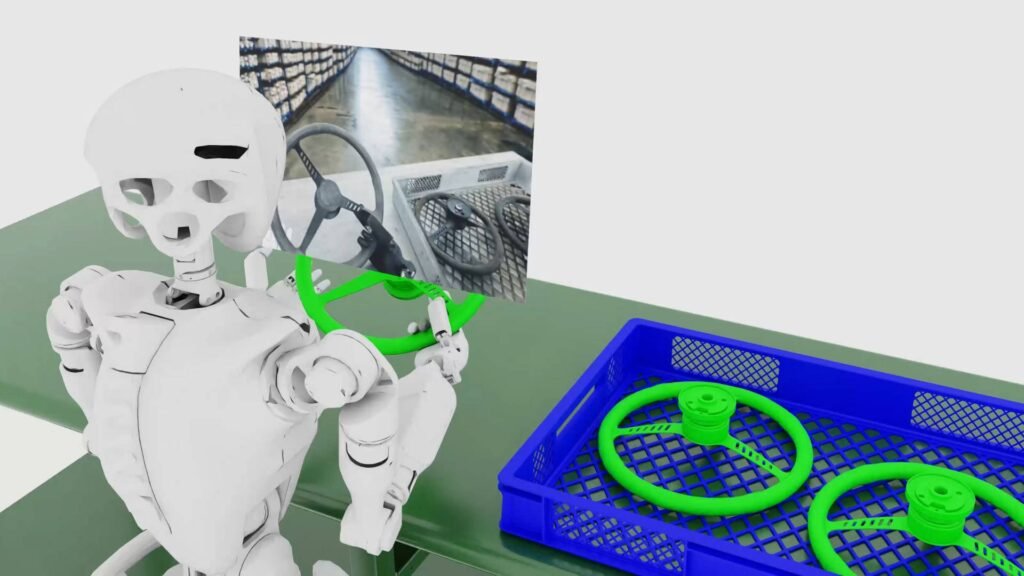

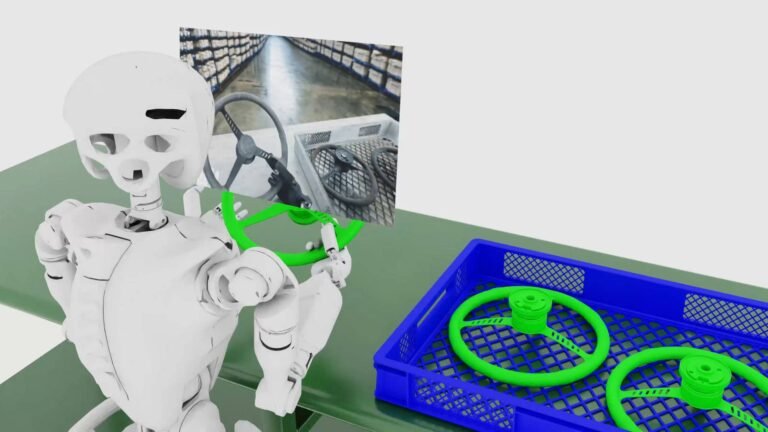

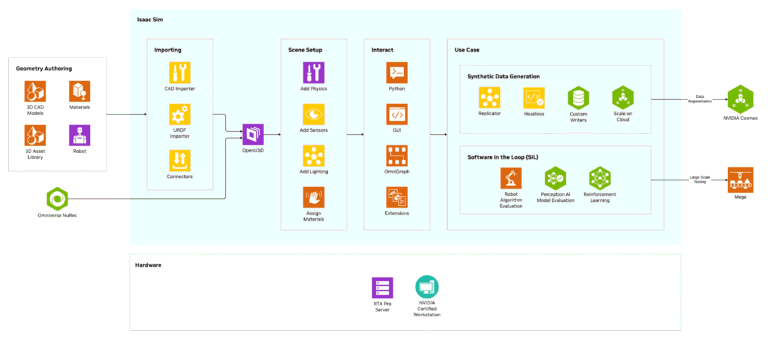

Isaac Sim – A high‑fidelity robotics simulation platform built on NVIDIA Omniverse. Engineers can synthetically generate sensor data, train reinforcement‑learning policies, and test edge cases (rare failure modes) in photorealistic digital twins before deploying to physical robots.

TAO Toolkit and Metropolis – Low‑code paths to customize vision and conversational AI models. Manufacturers can start from pretrained checkpoints and fine‑tune with proprietary defect images or domain‑specific terminology, then export optimized TensorRT engines for edge deployment.

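CUDA, TensorRT, and cuDNN – The underlying performance primitives that keep inference latency low and predictable, from camera shutter to actuator command. They do not guarantee determinism by themselves; safety-critical loops still depend on how the whole system (scheduling, I/O, operating system) is configured and validated.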
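Whether a camera-to-actuator loop actually stays inside its latency budget is something to verify by measurement. A minimal sketch, assuming per-frame latencies have already been collected in milliseconds: it reports the median, a nearest-rank 99th percentile, the worst case, and the number of budget misses. The name `latency_summary` and the sample values are hypothetical.

```python
import math
import statistics
from typing import Dict, List


def latency_summary(samples_ms: List[float], budget_ms: float) -> Dict[str, float]:
    """Summarize end-to-end latency samples against a fixed budget."""
    if not samples_ms:
        raise ValueError("need at least one latency sample")
    ordered = sorted(samples_ms)
    # Nearest-rank percentile: smallest value with at least 99% of samples at or below it.
    rank = max(1, math.ceil(0.99 * len(ordered)))
    return {
        "median_ms": statistics.median(ordered),
        "p99_ms": ordered[rank - 1],
        "max_ms": ordered[-1],
        # Spread between best and worst case, a crude jitter indicator.
        "jitter_ms": ordered[-1] - ordered[0],
        "misses": sum(1 for s in ordered if s > budget_ms),
    }


if __name__ == "__main__":
    # Hypothetical measurements with one slow frame.
    print(latency_summary([10.0, 11.0, 12.0, 13.0, 50.0], budget_ms=20.0))
```

For control loops, the tail (`p99_ms`, `max_ms`) matters more than the median, since a single late frame can violate a deadline.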

Manufacturing Use Cases

The resources emphasize concrete manufacturing scenarios where edge AI changes economics:

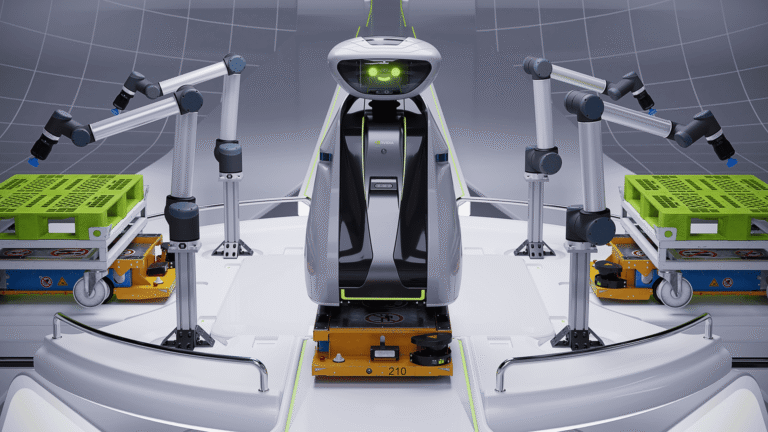

Autonomous logistics – AMRs from partners (MiR, Festo, Sarcos) use Jetson and Isaac ROS to navigate dynamic plant floors, reroute around human workers, and update maps in real time.

Visual inspection – Deep learning detects micro-defects on metal, glass, and composite surfaces, often with higher accuracy than rule-based machine vision, and models can be retrained as operators confirm or reject flagged parts.

Predictive maintenance – Vibration, acoustic, and thermal time‑series are streamed to edge servers where transformer or LSTM models flag deviations before catastrophic failure, reducing unplanned downtime.

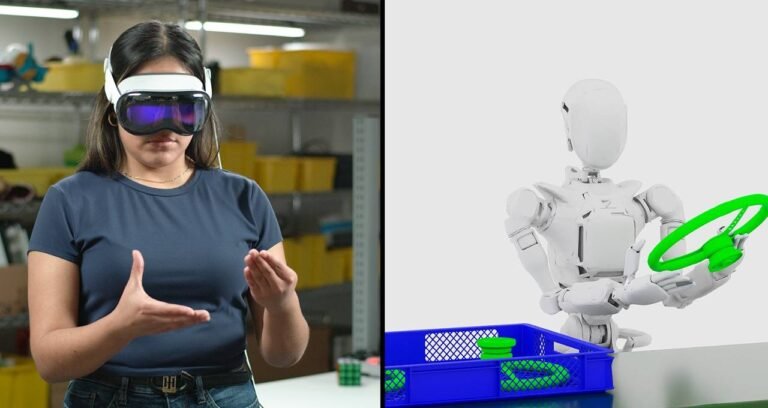

Worker safety and ergonomics – Spatial AI tracks pose and proximity to keep humans out of harm’s way and to certify that collaborative robots operate within force and speed limits.
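The "flag deviations" step in the predictive maintenance scenario above can be sketched with a much simpler stand-in than a transformer or LSTM: a rolling z-score that compares each new reading against a recent baseline. This swaps in a statistical baseline purely for illustration; the function name `flag_deviations`, the window size, and the threshold are assumptions.

```python
from collections import deque
from statistics import mean, pstdev
from typing import Deque, Iterable, List


def flag_deviations(readings: Iterable[float], window: int = 20,
                    threshold: float = 3.0) -> List[int]:
    """Return indices of readings that deviate strongly from the recent baseline."""
    history: Deque[float] = deque(maxlen=window)
    flagged: List[int] = []
    for i, x in enumerate(readings):
        if len(history) == window:
            mu = mean(history)
            sigma = pstdev(history)
            # A perfectly flat baseline (sigma == 0) is skipped rather than divided by.
            if sigma > 0 and abs(x - mu) / sigma > threshold:
                flagged.append(i)
                continue  # keep outliers out of the baseline
        history.append(x)
    return flagged


if __name__ == "__main__":
    # Hypothetical vibration amplitudes with one spike at index 7.
    vibration = [1.0, 1.1, 0.9, 1.0, 1.1, 0.9, 1.0, 5.0, 1.0]
    print(flag_deviations(vibration, window=6, threshold=3.0))  # [7]
```

A learned model would replace the mean and standard deviation with a forecast and its residual, but the surrounding logic (baseline, score, threshold, alert) stays the same.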

Ecosystem and Partnerships

NVIDIA does not aim to build factory robots itself. Instead, the developer resources highlight a partner ecosystem: robot vendors integrate Jetson reference designs; system integrators build edge AI pipelines using Metropolis; cloud providers offer managed edge orchestration. Reference blueprints, Helm charts, and containerized microservices lower the barrier to mixing proprietary control logic with open‑source perception stacks.

Strategic Significance

By converging simulation, training, and runtime on a single architecture, NVIDIA addresses a historic friction point in industrial robotics: the gap between AI research and factory reliability. Developers can iterate rapidly in Omniverse, quantize and profile models with TensorRT, and deploy hardened containers to Jetson or IGX—maintaining a single codebase from lab to line.

In the broader arc of manufacturing, these resources accelerate the transition from automated to autonomous production. As supply chains demand flexibility and labor shortages persist, edge AI becomes the connective tissue between physical robots, human operators, and enterprise planning systems. NVIDIA’s developer portal is the gateway to that integration—turning raw silicon into shop‑floor intelligence, one container at a time.
